If you design your testing set well enough and then don’t care about the accuracy of the output, then it’s not hard to get that kind of accuracy even without a brain scanner.

Well, that’s scary. Now we can have mind reading robots.

I thought of 1984, but yeah

I thought of people with locked-in syndrome.

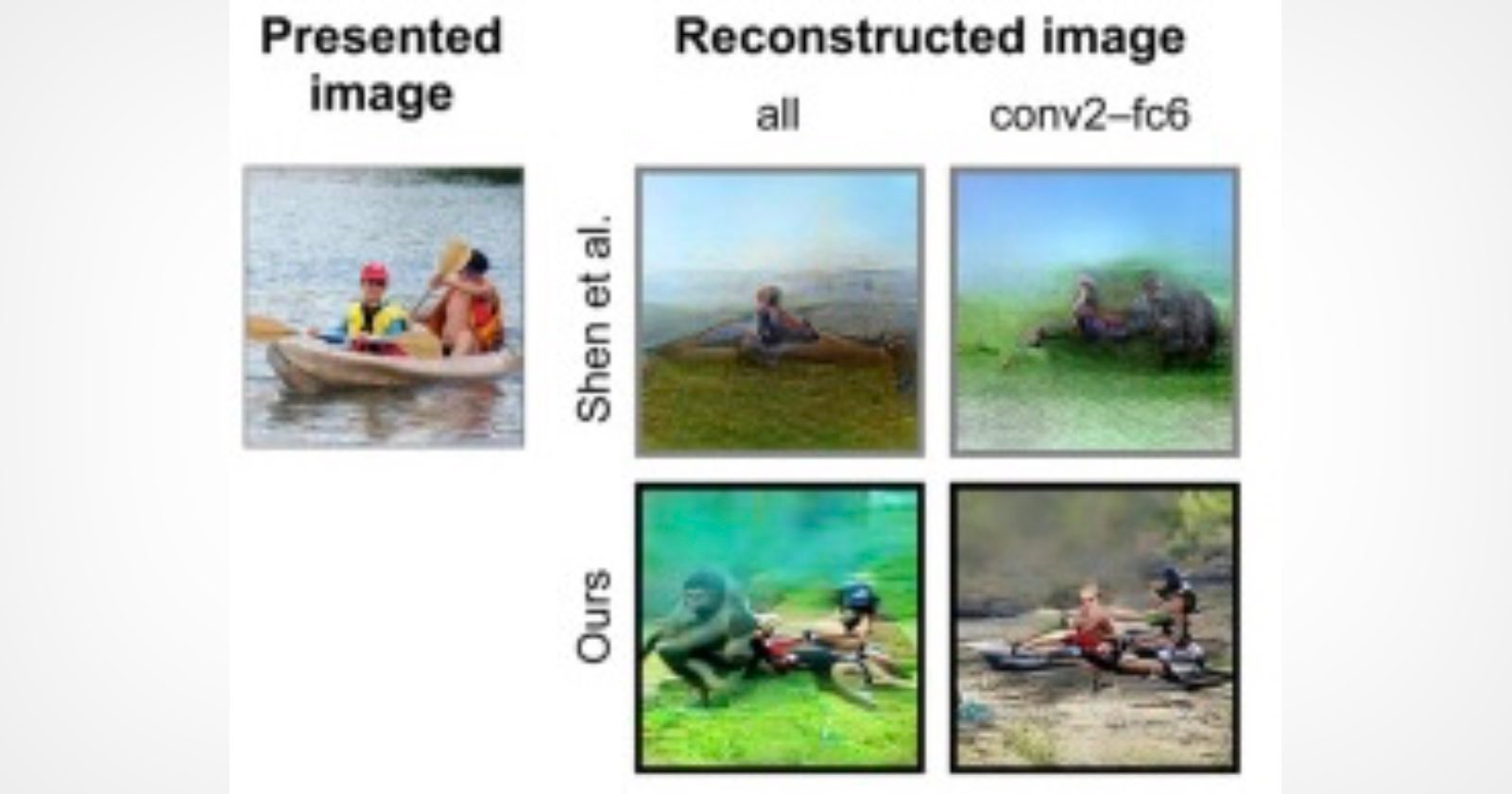

Idk what metric they’re using for “accuracy” but those images look sort of vaguely like the original at best

this season on Black Mirror, we re-write the first episode of Torchwood (the one where they briefly reanimate dead people to ask them how they died)

“You are detained, you have the right to remain silent, since everything you think can be used against you”

It’s not that impressive, they’re just generating ChatGPT prompts then feeding those into Stable Diffusion. It’s like peak AI grifting.

They’re hiding it behind academic language, but when you actually know how the underlying tech works, that’s what they’re doing.

Could you explain why this is a grift? The produced images in the example look scarily close to the original, whatever method they use to go from brainwaves -> image.

Because predictive texting and Stable Diffusion are both EXTREMELY easy to fudge data for. If you’ve ever used either, you’ll realize it’s extremely hard to get it to do what you want and extremely easy to get it to do what it wants. All those high-quality art renders you see are Texas sharpshooter cases: people try a bunch of random crap to see what looks good, then say “Look at what it can do!” when something works.
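
To make the sharpshooter point concrete, here’s a toy simulation. It is only a sketch of selection bias in general, not of anything the researchers actually did: the "similarity score" is just a uniform random number standing in for how close a generated image looks to the target, and the names `best_of_n_score` and `mean_reported_score` are made up for illustration.

```python
import random


def best_of_n_score(n_tries, rng):
    """Return the best score among n_tries purely random attempts.

    Each attempt's score is uniform in [0, 1); this is an assumed
    stand-in for 'how similar the output looks', not a real metric.
    """
    return max(rng.random() for _ in range(n_tries))


def mean_reported_score(n_tries, n_experiments=10_000, seed=0):
    """Average score you would report if you only ever showed the best try."""
    rng = random.Random(seed)
    total = sum(best_of_n_score(n_tries, rng) for _ in range(n_experiments))
    return total / n_experiments


# One attempt per target: no cherry-picking.
honest = mean_reported_score(n_tries=1)

# Twenty attempts per target, only the best one is shown.
cherry_picked = mean_reported_score(n_tries=20)

print(f"one try per target:    {honest:.2f}")
print(f"best of 20 per target: {cherry_picked:.2f}")
```

The generator here has no skill at all, yet the average score goes from about 0.5 with a single try to about 0.95 when you keep the best of 20 (the expected maximum of n uniform draws is n/(n+1)). Whether anything like this happened in the study is exactly the open question.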

But where exactly is the “fudging” happening?

the subjects were shown an image different from the 1,200, and their brain activity was measured under the fMRI 30 minutes to an hour later while asked to imagine what kind of image they had seen. Inputting the records, the neural signal translator then created score charts. The charts were input into another generative AI program in order to reconstruct the image, undergoing a 500-step revision process.

This sounds pretty straightforward. Even if the methods involve “fudging” and “throwing random crap at the wall”, what matters in the end is the accuracy of the results, as long as there’s no human-in-the-middle tweaking anything during each prediction.

Today’s not a good day for me so I’m not going to argue this any more, sorry.