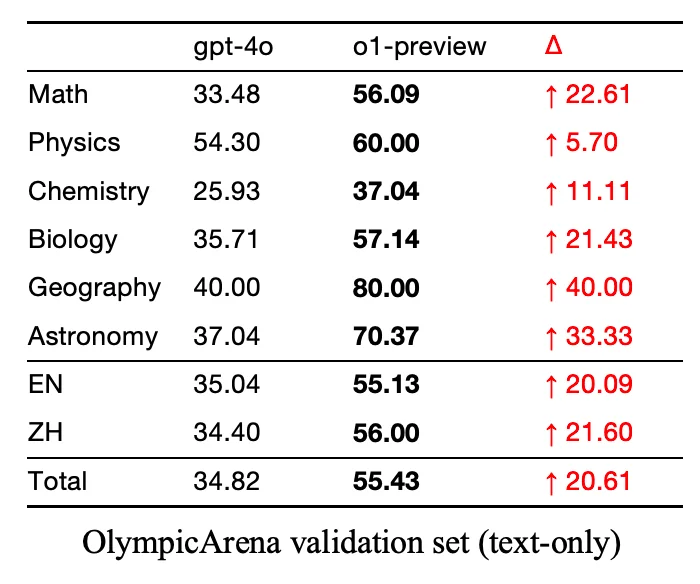

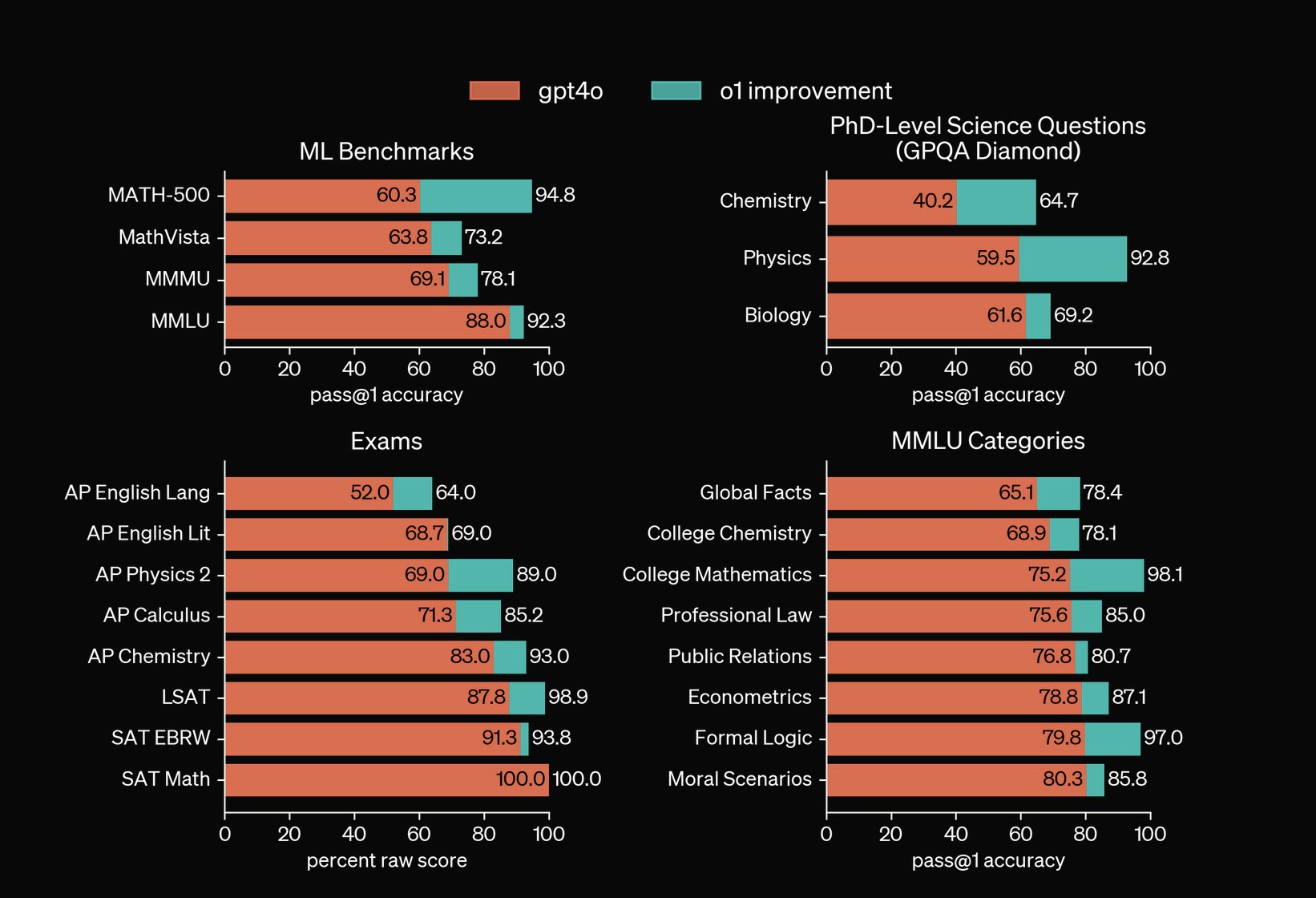

which does support the idea that there is a limit to how good they can get.

I absolutely agree. I’m not necessarily one to say LLMs will become these incredible general-intelligence-level AIs. I’m really just disagreeing with people’s negative sentiment: the idea that they’re getting worse or are scams just isn’t true at the moment.

It doesn’t prove it either: as I said, 2 data points aren’t enough to derive a curve.

Yeah, the only reason I didn’t include more is that it’s a pain in the ass pulling together multiple research papers / results across GPT-2, 3, 3.5, 4, o1, etc.

Yeah, 3.5 was pretty ass with bugs but could write basic code. 4o helped me with bugs sometimes and was definitely better, but would occasionally get caught in loops. This new o1-preview model seems pretty cracked all around though lol